Software Redefined is Wantware

Unlock digital innovation with MindAptiv’s wantware, which surpasses traditional software by eliminating the need for programming languages. Our codeless solutions are built for general-purpose and AI workloads across cloud, on-premises, edge, mobile, HPC, and IoT environments, redefining performance, quality, security, interoperability, efficiency, and more.

Essence®: Rearchitected for Codeless, Intent-Driven Computing

In the fast-paced digital world, traditional software development often lacks the agility and security needed to keep up. Essence overcomes these challenges with wantware, a codeless, intent-driven approach that transcends the limitations of traditional software. Features and functionality built this way integrate and adapt seamlessly, whether on a single device or across ecosystems, while remaining secure, efficient, and responsive to evolving requirements. Essence opens the door to a new era of resilient, accessible digital solutions.

The Heart of Transformation

Essence is a groundbreaking EcoSync platform, reshaping interoperability, security, and adaptability across varied computing environments. Its standout features are:

- An ultra-compact executable under 1 MB

- A single executable that runs on 20 Linux distributions

- Boot times of about 1 millisecond

- Direct translation of expressed human intent into machine behaviors

- The ability to enhance any code with meaning

Redefining Code as Building Blocks

Essence transforms software development by treating code, including complex AI algorithms, as modular, easily combinable elements akin to LEGO bricks. This enables:

- Intuitive assembly of digital solutions

- Fewer barriers between human intent and technological execution

- Simpler combining, understanding, and scaling of AI and any other software functionality

Revolutionary Direct-to-Component Instructions

Essence eliminates the need for virtualization software through its unique ability to generate automatically tuned, synced, scaled, and highly parallelized machine instructions and send them directly to components across a network.

Moreover, Essence can generate instructions that conform to any required protocol in real time, keeping systems adaptable and efficient in the face of evolving technological demands and standards, as well as rising infrastructure costs.

Empowering Digital Innovation

Essence represents a leap towards a future where digital innovation is unfettered by the constraints of traditional software development. By simplifying the creation, deployment, and interaction of digital solutions, Essence empowers individuals and organizations to unlock their creative potential and address complex challenges with ease. MindAptiv’s Essence is paving the way for a new era of digital solutions that are secure, adaptable, and aligned with human intent.

Beyond Linux: A Cross-Ecosystem Vision

Our vision extends beyond Essence for Linux. Essence will be available across a variety of platforms:

Windows, Android, macOS, iOS, tvOS, watchOS, webOS, and more.

With cross-platform parity, wantware products built on Essence operate seamlessly across ecosystems without modification, as Essence manages interoperability on the fly to deliver a consistent user experience across all devices and platforms.

Jump On Board

Sign up for our exclusive Pilot Program and join us in a pioneering endeavor to explore the many possibilities of creating, using, and trading Aptivs with wantware.

What New Products Will Be Powered by Wantware?

Introducing illumin8 Media: A New Horizon in Digital Experiences

MindAptiv is excited to announce the upcoming launch of illumin8 Media, a groundbreaking initiative set to redefine how digital media is created, distributed, and consumed. Built to run on the new cross-ecosystem framework powered by wantware, illumin8 Media promises to open new horizons in media engagement and monetization. With its unveiling anticipated this year, illumin8 Media heralds a new generation of products that adapt dynamically to both business and customer needs, setting a new standard for digital media platforms.

Be Part of the illumin8 Media Pilot Program

We are on the lookout for innovative partners to join our illumin8 Media Pilot Program. This selective opportunity is for those eager to shape the future of digital media technology. As a participant, you and your digital media organization will gain firsthand experience with illumin8 Media, provide critical insights that will guide its growth, and position your organization at the forefront of a digital media revolution.

If you are ready to elevate your media capabilities and influence the future of content technology, we invite you to apply to our Pilot Program and help carry the world of media to exciting new frontiers.

Pilot Program Advantages:

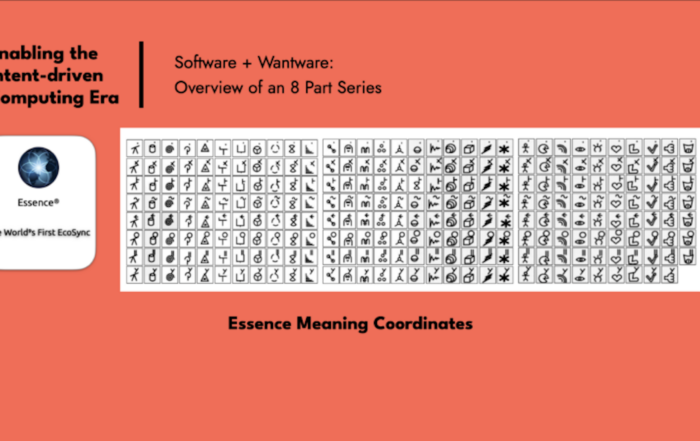

The Foundation Suite

Our foundation suite of Essence products, including Supercell, xSpot, SecuriSync, and Elevate, propels our vision with unified solutions that are not only efficient and user-centric but also resilient and adaptable to future digital landscapes. Because these products are made from meaning coordinates rather than code, they run without modification wherever Essence runs. Together, they mark a leap toward an innovative, intent-driven computing era, revolutionizing how we interact with digital ecosystems.
